In the glossy open-plan offices of Anthropic’s San Francisco headquarters, CEO Dario Amodei looks uncharacteristically optimistic. Amodei is known for his cautious approach to artificial intelligence development, and his recent enthusiasm about AI interpretability marks a significant shift in tone that’s sending ripples through Silicon Valley and beyond.

“We might actually crack this thing open before it’s too late,” he tells me, referring to what tech insiders call AI’s “black box problem” – the inability to understand exactly how modern AI systems make decisions. It’s a rare moment of optimism in a field often dominated by doomsday predictions.

Just days after Amodei’s blog post on interpretability went viral in tech circles, the atmosphere at a government technology summit in Washington, DC, couldn’t be more different. There, officials from the Trump administration are questioning whether diversity initiatives in AI development constitute “political bias” – creating what many researchers describe as a chilling effect on efforts to make AI more fair and representative.

These two storylines – the technical push for transparency and the political battle over AI’s social impact – are colliding at a crucial moment in AI’s evolution. As we enter May 2025, the technology that powers everything from your smartphone assistant to hospital diagnostic systems is becoming both more powerful and more controversial by the day.

LOOKING INSIDE THE MACHINE

Anthropic’s recent focus on interpretability isn’t happening in isolation. The company behind Claude 3.7, one of the world’s most advanced AI assistants, is responding to growing concerns about the “black box” nature of modern AI systems.

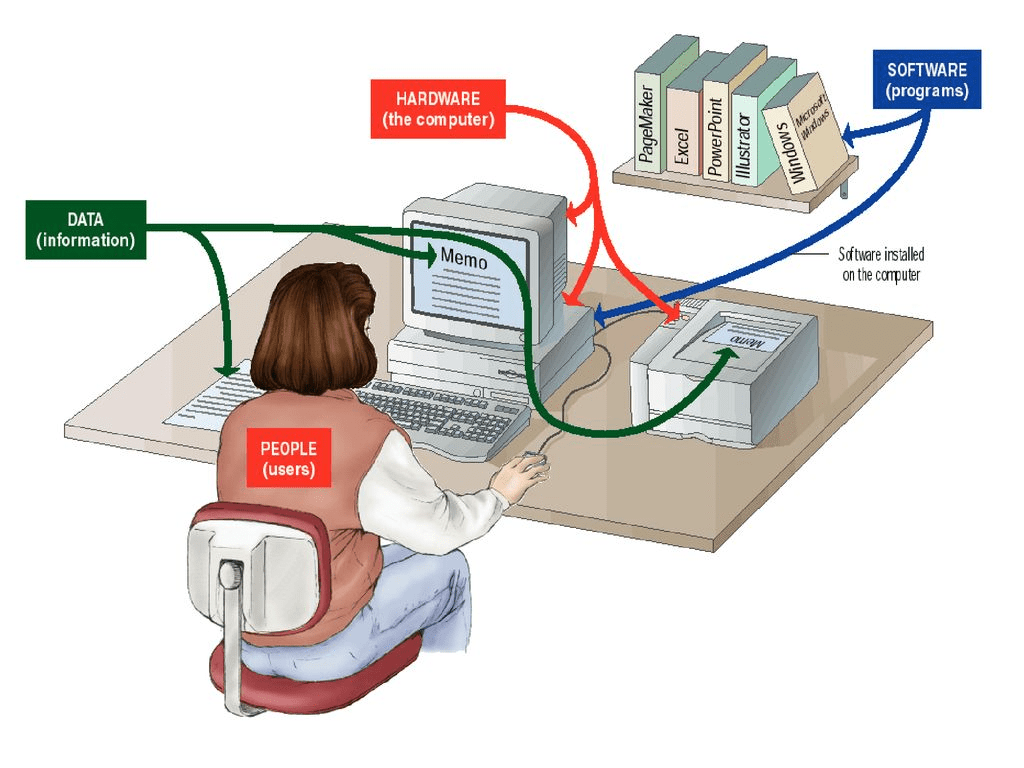

“Most people don’t realize how fundamentally different AI is from traditional software,” explains Dr. Leila Hassan, an AI researcher not affiliated with Anthropic. “When programmers write normal computer code, they know exactly what it’s doing. With neural networks, we create systems that essentially program themselves by finding patterns in massive amounts of data.”

The result is AI systems that can write essays, create artwork, or diagnose diseases – without their creators fully understanding how they reach those conclusions. This opacity has increasingly worried experts as these systems become more powerful and more integrated into daily life.

In his widely circulated blog post, Amodei described the situation bluntly: “Many of the risks and worries associated with generative AI are ultimately consequences of this opacity.”

What makes Amodei’s recent statements noteworthy is his growing confidence that this problem might be solvable before AI reaches what he terms “an overwhelming level of power” – a significant change in tone from previous industry assessments.

“Two years ago, most experts thought true interpretability was decades away, if possible at all,” says technology analyst Maya Patel. “The fact that Anthropic is now prioritizing this suggests they’ve seen promising research breakthroughs they haven’t fully disclosed yet.”

These breakthroughs come at a crucial moment. Recent models like GPT-5 and Claude 3.7 have demonstrated capabilities that surprised even their creators – from sophisticated reasoning to what some observers characterize as signs of emergent planning abilities.

“We’re seeing systems that can handle increasingly complex tasks with minimal instruction,” says Hassan. “That’s amazing from a capability standpoint, but terrifying from a safety perspective if we don’t understand how they’re making decisions.”

POLITICS ENTERS THE CHAT

While technologists push for greater transparency, political forces are simultaneously reshaping how AI systems are developed and deployed.

According to reporting by the Associated Press, the Trump administration has launched investigations into AI companies’ efforts to address bias in their systems – particularly initiatives designed to ensure AI treats all users fairly regardless of race, gender, or other characteristics.

These investigations represent an extension of the administration’s broader pushback against corporate diversity, equity, and inclusion (DEI) programs, now reaching into the technological sphere.

“There’s a fundamental disagreement about what constitutes ‘bias’ in these systems,” explains political analyst James Chen. “One perspective sees addressing documented disparities as correcting flaws; another sees these interventions themselves as introducing political bias.”

The shift has raised serious concerns among researchers who have documented how uncorrected AI systems can perpetuate or amplify existing societal biases.

Harvard University sociologist Ellis Monk, whose work on colorism led to improvements in how Google’s AI image tools represent diverse skin tones, expressed uncertainty about the future of such work in the current political climate.

“The data is clear that without specific interventions, these systems often perform worse for certain demographics,” Monk noted in a recent interview. “The question is whether we consider that a technical problem to solve, or accept it as an inevitable reflection of society.”

Industry insiders, speaking on condition of anonymity, report growing confusion about regulatory expectations. “Companies are caught between contradictory demands,” said one AI ethics researcher at a major tech firm. “Academic experts and many users want systems that minimize harmful biases, while political pressure increasingly frames those same efforts as ideological.”

REAL-WORLD CONSEQUENCES

The stakes of these technical and political battles extend far beyond abstract philosophical debates.

Jennifer Liu, a small business owner in Phoenix, discovered this firsthand when applying for a business loan last month. “The bank used some AI system to evaluate my application, and it got rejected with no real explanation,” she tells me. “When I finally spoke to a human loan officer, they couldn’t explain the decision either. They just said, ‘The system scored you too low.’”

Liu’s experience illustrates the real-world impact of AI opacity – and why interpretability matters beyond technical circles. Without understanding how AI systems reach conclusions, it becomes nearly impossible to appeal or correct unfair outcomes.

Similar stories are emerging across healthcare, employment, housing, and criminal justice – all areas where AI increasingly influences life-altering decisions.

Dr. Robert Jackson, who researches healthcare algorithms at Johns Hopkins University, points out that interpretability isn’t just about fairness – it’s also about safety.

“If an AI suggests a medical treatment, doctors need to understand the reasoning behind that recommendation,” he explains. “Without that transparency, we’re asking medical professionals to trust systems they can’t fully evaluate.”

THE COMMERCIAL BATTLEGROUND

Against this backdrop of technical innovation and political tension, market competition is intensifying. OpenAI recently announced that ChatGPT now helps users find products online through natural conversation, citing “over 1 billion web searches just in the past week,” according to company statements.

This move signals OpenAI’s ambition to compete directly with Google in the lucrative search and e-commerce referral markets. The updated ChatGPT allows shoppers to find and compare items through conversation, then connect to merchants for purchases – initially focusing on fashion, beauty, and home electronics.

Google has responded by integrating its Gemini assistant directly into search results, providing AI-generated answers above traditional website links – a significant redesign of the search experience that has dominated internet navigation for over two decades.

“We’re seeing a fundamental shift in how people interact with information and products online,” says e-commerce analyst Sofia Rodriguez. “These AI systems are positioning themselves as trusted intermediaries between consumers and businesses – which raises the stakes for both transparency and fairness.”

THE ROAD AHEAD

As May 2025 unfolds, these competing forces – the technical push for interpretability, political battles over bias, and commercial competition for market dominance – are reshaping AI development in real time.

For everyday users, the consequences may not be immediately visible but will profoundly shape future interactions with technology. Will AI systems become more transparent and accountable? Will efforts to address algorithmic bias continue or face political obstacles? Will consumers understand how AI shapes the information and options presented to them?

Back at Anthropic’s headquarters, Amodei remains cautiously optimistic about the interpretability challenge. “This isn’t just a technical problem – it’s about making AI systems that people can genuinely trust and understand,” he says. “That’s going to require progress on both the scientific and social fronts.”

As AI systems grow more powerful and more integrated into daily life, that trust may be the most valuable commodity of all. For now, the race is on to peek inside the black box before it becomes too powerful to question.