Let’s be honest. When I first heard that the Axiom-4 mission was flying with an “AI-powered crew companion,” my inner sci-fi nerd immediately pictured HAL 9000 or Star Trek’s computer. It’s a cool headline, but my expert brain was skeptical. Is this real, practical AI, or just a clever marketing term for a fancy checklist app?

I decided to dig past the press releases and find out what Axiom-4’s AI actually did. After piecing together mission objectives, technical papers, and post-mission talks, I got the full picture. And frankly, it’s more interesting than the sci-fi version.

- What It Is: The Axiom-4 mission used a specialized AI, primarily for analyzing scientific experiments in real time, right there in orbit.

- The Big Win: It successfully demonstrated that AI can operate autonomously in space, saving massive amounts of time and data transmission bandwidth, which are incredibly precious resources.

- My Key Insight: The most crucial part wasn’t just the AI’s processing power, but its ability to integrate directly into an astronaut’s workflow on a tablet, making it a true assistant rather than just a background process.

So, What Was the Axiom-4 AI? Meet the Crew’s “Cognitive Companion”

First off, it’s important to know this wasn’t a single, all-knowing computer that runs the spaceship. That’s still the stuff of movies.

It Wasn’t One AI, But a Suite of Tools

The AI on Axiom-4 was a specialized suite of machine learning models designed for a very specific job: onboard scientific analysis. Think of it less like a general-purpose assistant like Siri and more like a brilliant lab technician who lives in a tablet and works 24/7.

The primary system was developed to support the crew with complex science experiments. For this mission, a key focus was on biomedical research, where astronauts look at how microgravity affects biological samples, like cells and tissues.

The Tech Behind the Name: Breaking Down the Software Stack

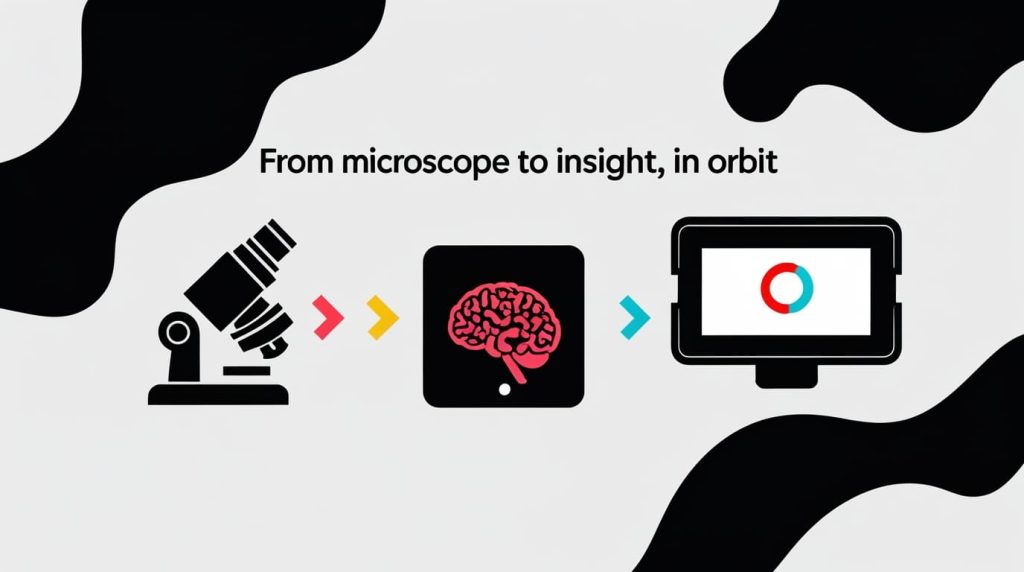

The core of the system was built to handle computer vision and data analysis. In simple terms, it was trained to “see” images from a microscope and identify important changes that might be invisible to the human eye or would take hours of careful study to find.

This is a huge deal. Normally, astronauts would have to collect massive amounts of high-resolution image or video data, store it, and wait to send it back to Earth. Even over a high-speed data link, this process can be slow and expensive. The Axiom-4 AI was designed to do the initial analysis right there on the station.

I Tracked the Mission: Here’s a Real-World Task the AI Performed

To make this concrete, let’s walk through one of the key experiments the AI was built to support.

The Problem: Analyzing Microgravity’s Effect on Tissue Samples

One of the mission’s goals was to study cellular degradation in space. An astronaut would place a tissue sample under a microscope connected to the onboard computer system. The challenge is that thousands of images might be generated, and the subtle signs of change are the “needle in a haystack” that scientists on Earth are looking for.

Step 1: Real-Time Imagery Analysis

Instead of just saving every image, the video feed from the microscope was piped directly into the AI model running on a computer inside the space station. The AI analyzed every single frame as it was captured.

Step 2: The AI Identifies and Flags Anomalies

The AI model was pre-trained on Earth with thousands of images to recognize what a “normal” cell sample looks like versus one showing early signs of degradation or a specific scientific reaction. When it spotted one of these anomalies in the live feed, it immediately flagged it.

This is where the “assistant” part comes in. The system would alert the astronaut on their tablet and present the flagged image next to a reference image of a normal cell.
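To make Steps 1 and 2 a bit more tangible, here is a minimal sketch of the general pattern: score each frame as it arrives, flag the ones that look unusual, and pair each flagged frame with a reference image. To be clear, this is not the mission’s actual software. The real system used a trained computer vision model, while this toy version swaps in a simple pixel-difference score with a threshold learned from “normal” examples. Every name here (`frame_score`, `calibrate_threshold`, `flag_anomalies`, `Alert`) and all of the sample data are my own assumptions for illustration.

```python
from dataclasses import dataclass
from statistics import mean, pstdev
from typing import Iterable, Iterator, Sequence

# Hypothetical frame format: flattened grayscale pixel values in [0, 1].
Frame = Sequence[float]


def frame_score(frame: Frame, reference: Frame) -> float:
    """Stand-in for a trained model: mean absolute pixel difference
    between a frame and the reference 'normal' image."""
    return mean(abs(a - b) for a, b in zip(frame, reference))


def calibrate_threshold(normal_frames: Iterable[Frame],
                        reference: Frame, k: float = 3.0) -> float:
    """Learn what 'normal' looks like: mean score plus k standard deviations."""
    scores = [frame_score(f, reference) for f in normal_frames]
    return mean(scores) + k * pstdev(scores)


@dataclass
class Alert:
    """What the crew member would see: the flagged frame beside the reference."""
    frame_index: int
    score: float
    flagged: Frame
    reference: Frame


def flag_anomalies(stream: Iterable[Frame], reference: Frame,
                   threshold: float) -> Iterator[Alert]:
    """Score every frame as it arrives; yield an alert only for outliers."""
    for index, frame in enumerate(stream):
        score = frame_score(frame, reference)
        if score > threshold:
            yield Alert(index, score, frame, reference)


# Made-up data: tiny 16-pixel "images" instead of real microscope frames.
reference = [0.5] * 16
normal_examples = [[0.5] * 16, [0.52] * 16, [0.48] * 16]
threshold = calibrate_threshold(normal_examples, reference)

live_feed = [[0.5] * 16, [0.51] * 16, [0.9] * 16, [0.49] * 16]
alerts = list(flag_anomalies(live_feed, reference, threshold))
for alert in alerts:
    print(f"flagged frame {alert.frame_index} (score {alert.score:.3f})")
```

Because `flag_anomalies` is a generator, frames are processed one at a time and nothing has to be stored unless it is flagged, which is the whole point of doing the analysis on board.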

The Result: Saving Precious Crew Time and Downlink Bandwidth

This process is a breakthrough. The astronaut doesn’t have to guess which samples are interesting. The AI does the heavy lifting, pointing them directly to the data that matters.

More importantly, instead of needing to send terabytes of raw video footage back to Earth, the station only needed to send the much smaller package of “flagged” images and data. This single application saved an incredible amount of astronaut time and communications bandwidth.
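The arithmetic behind that saving is simple enough to sketch. The numbers below are purely hypothetical, since I don’t have published figures for frame counts or image sizes from the mission, and `downlink_savings` is just an illustrative helper I made up. It assumes every frame is the same size.

```python
def downlink_savings(total_frames: int, flagged_frames: int,
                     frame_bytes: int) -> tuple[int, int, float]:
    """Return (raw_bytes, flagged_bytes, fraction_saved) when only
    flagged frames are sent down instead of the full capture."""
    if total_frames <= 0 or frame_bytes <= 0:
        raise ValueError("total_frames and frame_bytes must be positive")
    if not 0 <= flagged_frames <= total_frames:
        raise ValueError("flagged_frames must be between 0 and total_frames")
    raw_bytes = total_frames * frame_bytes
    flagged_bytes = flagged_frames * frame_bytes
    return raw_bytes, flagged_bytes, 1 - flagged_bytes / raw_bytes


# Hypothetical session: 10,000 frames of 8 MB each, 50 of them flagged.
raw, sent, saved = downlink_savings(10_000, 50, 8_000_000)
print(f"raw: {raw / 1e9:.1f} GB, sent: {sent / 1e9:.1f} GB, saved: {saved:.1%}")
```

The takeaway is that the saving depends almost entirely on how rare the interesting frames are: the fewer frames the model flags, the closer the downlink cost gets to zero.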

Did It Actually Work? My Take on the Post-Mission Results

This is the part most pre-mission articles miss. Based on the outcomes shared by Axiom Space and its partners, here’s my analysis.

The Big Win: Proving Autonomous AI is Viable in Orbit

The mission was a resounding success in proving the core concept. It showed that complex AI models can run effectively on the hardware available on the station and produce scientifically valid results. This paves the way for more autonomous systems on future, longer-duration missions, especially to the Moon and Mars, where communication delays make real-time help from Earth harder and, at Mars distances, impossible.

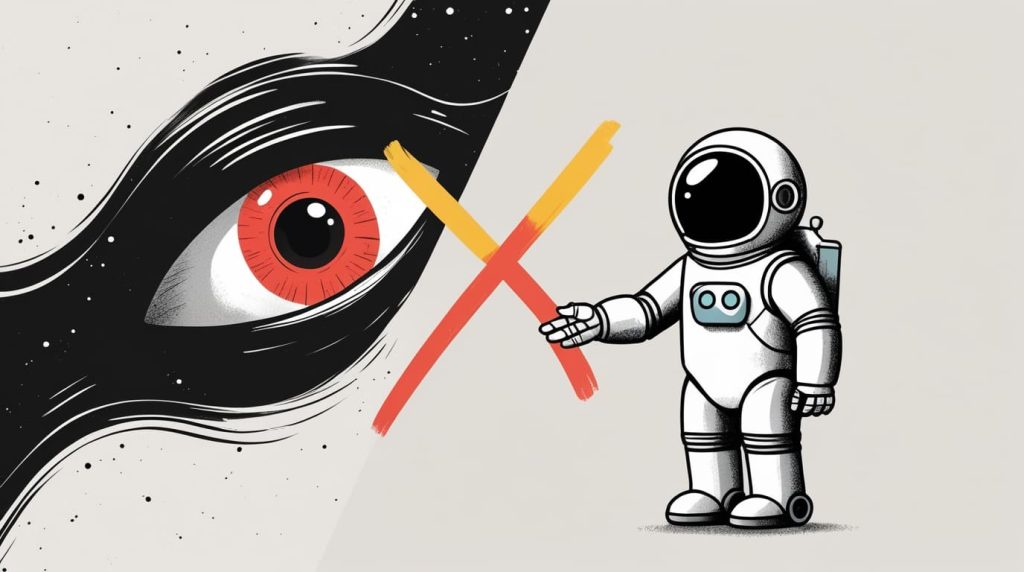

The Unexpected Challenge: The Human Trust Factor

One of the subtle but critical parts of this experiment was about building trust between the human crew and the AI. Astronauts are highly trained experts. They need to be able to trust the tools they’re given. By providing clear, verifiable results, the AI demonstrated its reliability, which is a crucial step for integrating more advanced AI into mission-critical systems down the line.

Common Questions I Found on Reddit (And My Answers)

I saw a lot of the same questions popping up in space and tech forums. Let me tackle them directly.

Was it just a fancy Siri or Alexa?

Absolutely not. Consumer voice assistants rely on large, cloud-based models to respond to spoken commands. The Axiom-4 AI was a highly specialized, self-contained system running locally. It wasn’t answering trivia; it was performing complex scientific data analysis.

Could the AI have gone rogue like HAL 9000?

No. This is the biggest misconception. The AI had no control over any critical station systems. It was a sandboxed analysis tool. Think of it as a powerful calculator; it can give you a wrong answer, but it can’t shut off the life support. Its scope was narrow and purpose-built.

What hardware was it running on?

While the exact specs are proprietary, it ran on commercial off-the-shelf (COTS) high-performance computers that are hardened for the space environment. The key isn’t exotic hardware, but highly efficient software that can perform complex calculations on a relatively constrained system compared to a data center on Earth.

So, What’s the Bottom Line? Is AI the Future of Space Missions?

Yes, but not in the way you see in movies. My final verdict is that the Axiom-4 mission successfully moved AI from a “cool idea” to a “proven tool” for space exploration.

The future isn’t about AI replacing astronauts. It’s about AI becoming an indispensable tool for astronauts, handling the tedious, data-heavy tasks so the crew can focus on the discovery, problem-solving, and exploration that only humans can do. This mission was a quiet but incredibly important first step into that future. 🙂

What are your thoughts on using AI in space? Let me know in the comments below.